OneDrive’s Poison Setting - Fri, 8 May 2020

OneDrive’s default setting of no limit on network upload and download rates has caused years of Internet problems at my house. Unbeknownst to us, it would from time to time consume most or all of our Internet bandwidth, affecting me on my ethernet-connected desktop computer and everyone else in the house whose devices were connected to our Wi-fi. It is now obvious to me that this hogging of bandwidth followed any significant upload of pictures or files from my desktop computer to OneDrive, and the effect sometimes lasted for days!

Yikes! I’m flabbergasted at how we finally discovered the reason behind our Internet connection problems. A number of times in the past few years, we’ve found the Wi-fi and TV in the house to be spotty. We had got used to unplugging the power on the company-supplied modem and waiting the 3 or 4 minutes for it to reset. Often that seemed to improve things, or maybe the reset just made us feel it had done so – we don’t really know. We’ve called our supplier several times, and they came over, inspected our lines, checked our modem. In all cases, the problem repaired itself, if not immediately, then over the course of a few days.

It didn’t get really bad too often. But it did about 2 months ago, just after my wife and I got back from a wonderful Caribbean cruise (which we followed up with 2 weeks of just-in-case self-isolation at home). I had to replace my computer, and very shortly after the new one was installed, we had several days of Internet/TV problems.

I called my service provider (BellMTS) and told them about the poor service we were having. They tried to help over the phone. We rebooted the modem several times, but that didn’t help.

They sent a serviceman to check the wiring from our house to the distribution boxes on our block. We thought that might have helped, and not long after, everything seemed pretty good.

We had very few problems over the next 6 weeks, but just last night, I was in the middle of an Association of Professional Genealogists Zoom webinar (Mary Kircher Roddy – Bagging a Live One; Reverse Genealogy in Action), when suddenly I lost my Internet in my other windows and my family lost the Internet on their devices. Even our TV was glitching. However, the Zoom webinar continued on uninterrupted. I could not figure this out at all.

After the webinar ended, I called my Internet/TV provider and things seemed to improve. The next morning, the troubles recurred. I called my provider again. They sent a serviceman. He came into the house (respecting social distancing) and cut the cable at our box so they could test the wiring leading to our house. He was away for over an hour doing that. When he came back, they had set up some sort of new connectors. He reconnected us. But no, we still had the problem. He then found what he thought was a poorly wired cable at the back of the modem. He fixed that, but still the problem. Then he replaced our modem and the power supply and the cabling. Still the problem.

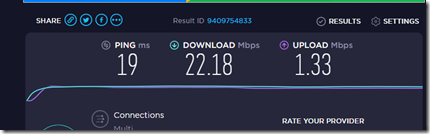

We were monitoring the problem using speedtest.net. We’ve got what’s called the Fibe 25 plan**. We should be getting up to 25 Mbps (megabits per second) download and up to 3 Mbps upload. We were getting between 1 and 2 Mbps download and 1 Mbps upload. Not good.

After several more attempted resets and diagnostic checks, we were now 3 hours into this service call. The serviceman’s next idea was the one that worked. He said to turn off all devices connected to the Internet, then turn them back on one by one, and we might find that one of our own devices was causing the problem. We did so, and when we got to my ethernet-connected computer, it was the one slowing everything down. The serviceman said that was it: we had found the reason. He couldn’t stay any longer and left.

I checked and sure enough, when my computer was on, we got almost no Internet, but when it was off, everything was fine. Here was the speed test with my computer off:

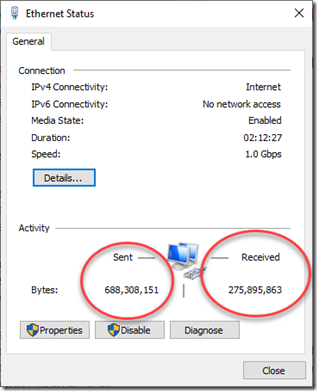

When I went to the network settings to see if it was a problem with my ethernet cable, I could see a large amount of Activity, with the Sent and Received values changing quite quickly.

My first thought was that maybe my computer had been hacked. I opened Task Manager and sorted by the Network column to see what was causing all the network traffic. There was my answer: in first place, consuming the vast majority of my network bandwidth, was Microsoft OneDrive.

My older daughter immediately commented that she had long ago stopped using the free 1 TB of OneDrive space we each get by being Microsoft 365 subscribers because she found it hogged all her resources.

Eureka! What had I done 2 months ago? I had uploaded all my pictures and videos from our trip to OneDrive. And what was I doing while watching that Zoom webinar last night? I was uploading several folders of pictures and videos to OneDrive. What I wasn’t doing during the 6 weeks in between was any significant uploading to OneDrive.

In Task Manager, I ended the OneDrive task. Sure enough my download speed from speed test went back up to good numbers, and our Internet/TV problem had finally been isolated.

It didn’t take me long searching the Internet to find that OneDrive had network settings. The default was (horrors) a couple of “Don’t limit” settings. The “Limit to” boxes, which were not selected, both had suggested defaults of 125 KB/s (kilobytes per second). I did some calculations, selected both boxes, set the upload value to 100 KB/s, and left the download value at 125 KB/s.

Note that these are in KB/s whereas Speedtest gives Mbps. The former is thousands of bytes per second and the latter is millions of bits per second. There are 8 bits in a byte. So 125 KB/s = 1.0 Mbps, which is 4% of my 25 Mbps download capacity, and 100 KB/s = 0.8 Mbps, which is about 27% of my 3 Mbps upload capacity. Now when OneDrive is syncing, there should be plenty left for everyone else. Yes, OneDrive will take several times longer to upload now. But it should no longer affect our Internet and TV in any significant way.
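For anyone who wants to redo this arithmetic for their own plan, here is a minimal Python sketch of the conversion. It assumes decimal units (1 KB = 1000 bytes, 1 Mbps = 1,000,000 bits per second), the same as in the paragraph above. The function names are my own, and the 10 GB upload size is a made-up figure for illustration only, not a measurement from my computer.

```python
def kbytes_per_s_to_mbps(kb_per_s: float) -> float:
    """Convert kilobytes per second to megabits per second (decimal units)."""
    bits_per_second = kb_per_s * 1000 * 8  # 1000 bytes per KB, 8 bits per byte
    return bits_per_second / 1_000_000


def share_of_plan(kb_per_s: float, plan_mbps: float) -> float:
    """Percentage of a plan's capacity that a given KB/s limit would use."""
    return kbytes_per_s_to_mbps(kb_per_s) / plan_mbps * 100


def upload_hours(size_gb: float, rate_mbps: float) -> float:
    """Hours needed to send size_gb gigabytes at a steady rate_mbps."""
    seconds = size_gb * 8000 / rate_mbps  # 1 GB = 8000 megabits
    return seconds / 3600


if __name__ == "__main__":
    # Limits from the OneDrive settings against the Fibe 25 plan (25 down, 3 up).
    print(f"Download limit: {kbytes_per_s_to_mbps(125):.1f} Mbps, "
          f"{share_of_plan(125, 25):.0f}% of the plan")
    print(f"Upload limit:   {kbytes_per_s_to_mbps(100):.1f} Mbps, "
          f"{share_of_plan(100, 3):.0f}% of the plan")

    # Hypothetical 10 GB batch of photos and videos (illustrative size only).
    for label, rate in (("unlimited, 3 Mbps", 3.0), ("limited, 0.8 Mbps", 0.8)):
        print(f"10 GB upload, {label}: {upload_hours(10, rate):.1f} hours")
```

With the upload capped at 0.8 Mbps instead of the full 3 Mbps, the same upload takes 3 / 0.8 = 3.75 times as long, which is the "several times longer" trade-off mentioned above.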

Also notice there’s an “Adjust automatically” setting for the Upload rate. Maybe that is the one to choose, but unfortunately they don’t also have that setting for the Download rate, which is maybe more important.

My wife and daughters have complained to me for a number of years, claiming my computer was slowing the Internet. Up to now, I did not see how that could be. Yes, as it turns out, it was technically coming from my computer, but the culprit in fact was OneDrive’s poison setting. I am someone who turns off my desktop computer when I am not using it, and also every night unless I have it working on something. No wonder our problems were spotty. When my computer was off, OneDrive could not take over. So my family was right all along.

Well, that’s now fixed. I will let my TV/Internet provider know about this so that they can save their own time and their customers’ time when someone else has a similar intermittent Internet problem that may be caused by OneDrive. I will also let Microsoft know through their feedback form, and hopefully one day they will decide either to change their default network traffic settings to something that would not overwhelm the capacity of most home Internet connections, or to change the algorithm so that “unlimited” has a lower priority than all other network activity. Maybe that “Adjust automatically” setting is the magic algorithm. If so, it could be the default, but it should also be added as an option for the Download rate, to eliminate OneDrive’s greediness.

Are you listening, Microsoft?

And I’d recommend that anyone who uses OneDrive check whether their OneDrive Network settings are set to no limit. If they are, change them and you might see the speed and reliability of your Internet improve dramatically.

---

**Note: The Fibe 25 plan is the maximum now available from BellMTS in our neighborhood. They are currently (and I mean currently since my front lawn is all marked up) installing fiber lines in our neighborhood that will allow much higher capacity. Once installed, I should have access to their faster plans, and will likely subscribe to their Fibe 500 plan for only $20 more per month. That will give up to 500 Mbps download (20x faster) and 500 Mbps upload (167x faster). They have even faster plans, but that should be enough because our wi-fi is 20 MHz which is only capable of 450 Mbps. My ethernet cable (which was hardwired in from the TV downstairs to my upstairs office when we built the house 34 years ago) is capable of 1.0 Gbps which is 1000 Mbps. Once we switch plans, I’ll likely give OneDrive higher limits (maybe 100 Mbps both ways) and it will be a new world for us at home on the Internet.
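The ratios in this note, and what a 100 Mbps limit would look like in the KB/s units that the OneDrive settings use, can be checked with a short Python sketch. It again assumes decimal units (1 KB = 1000 bytes). The function names are my own, and I have not checked whether OneDrive would actually accept a limit that large.

```python
def speedup(new_mbps: float, old_mbps: float) -> float:
    """How many times faster the new rate is than the old one."""
    return new_mbps / old_mbps


def mbps_to_kbytes_per_s(mbps: float) -> float:
    """Convert megabits per second to kilobytes per second (decimal units)."""
    bytes_per_second = mbps * 1_000_000 / 8  # 8 bits per byte
    return bytes_per_second / 1000


if __name__ == "__main__":
    # Fibe 500 (500 down, 500 up) compared with Fibe 25 (25 down, 3 up).
    print(f"Download: {speedup(500, 25):.0f}x faster")
    print(f"Upload:   {speedup(500, 3):.0f}x faster")

    # A 100 Mbps cap expressed in the KB/s units of the OneDrive settings.
    print(f"100 Mbps = {mbps_to_kbytes_per_s(100):,.0f} KB/s")
```

So a 100 Mbps limit would mean entering 12,500 in each “Limit to” box, compared with the 100 and 125 I am using today.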

Feedspot 100 Best Genealogy Blogs
